Over the past year, most teams have figured out how to integrate LLMs. The harder part begins after that.

- How do you manage multiple agents across teams?

- How do you prevent accidental configuration changes?

- How do you control model spend when routing across providers?

- How do you standardize common AI workflows without rewriting everything from scratch?

Recent FloTorch releases focus on these practical challenges, emphasizing how to operate AI systems in shared production environments rather than simply experiment with them.

Governance That Matches Real Team Structures

When multiple teams share the same AI platform, clear boundaries are a requirement, not an option.

FloTorch strengthens role-based access control at both the organization and workspace levels. The platform supports clear role separation between Organization Owner, Organization Admin, Workspace Admin, Workspace Developer, and Workspace Member.

Permissions are enforced at the API level, not just in the UI. Actions are validated consistently whether initiated through the console or SDK, eliminating ambiguity about who can create, modify, archive, or permanently delete production resources.

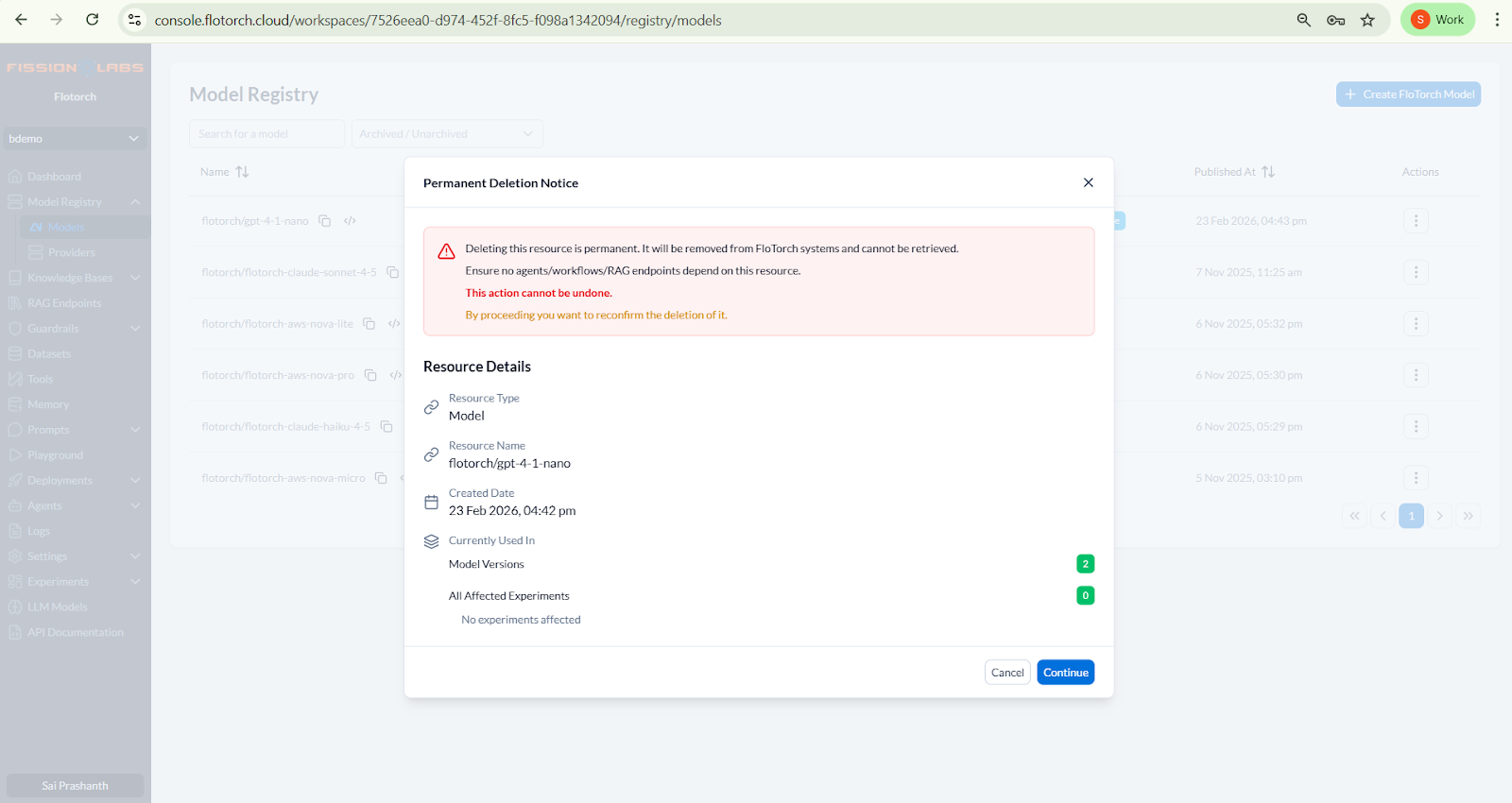

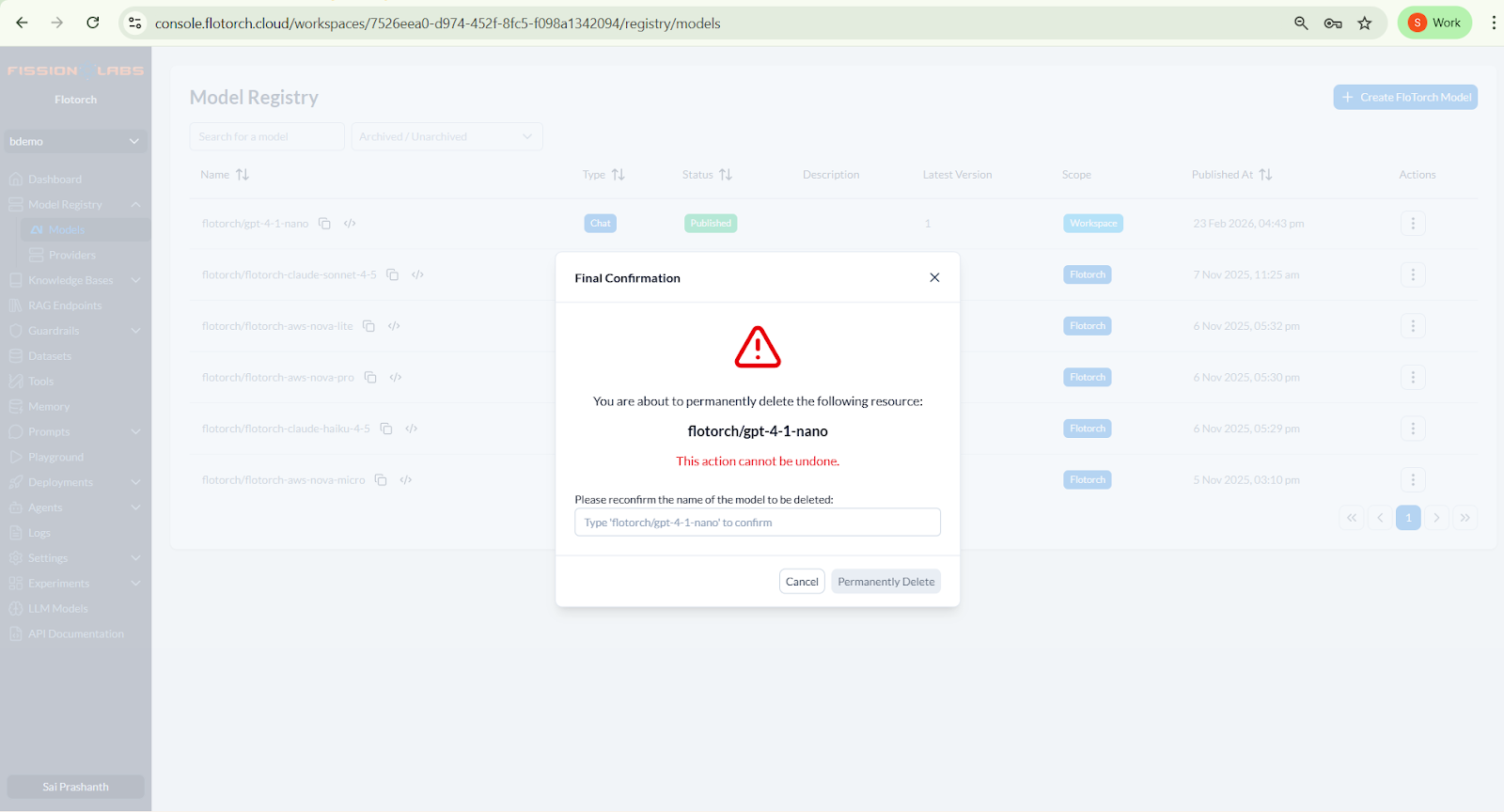
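
To make API-level enforcement concrete, here is a minimal sketch of a permission check that runs in the request handler rather than in the UI. The role names come from the list above; the permission matrix, the action names, and the `authorize` and `handle_request` helpers are illustrative assumptions, not FloTorch's actual policy or API.

```python
from enum import Enum


class Role(Enum):
    ORG_OWNER = "Organization Owner"
    ORG_ADMIN = "Organization Admin"
    WORKSPACE_ADMIN = "Workspace Admin"
    WORKSPACE_DEVELOPER = "Workspace Developer"
    WORKSPACE_MEMBER = "Workspace Member"


# Hypothetical permission matrix: the actions each role may perform.
# The real platform's assignments may differ.
PERMISSIONS = {
    Role.ORG_OWNER: {"create", "modify", "archive", "delete"},
    Role.ORG_ADMIN: {"create", "modify", "archive", "delete"},
    Role.WORKSPACE_ADMIN: {"create", "modify", "archive", "delete"},
    Role.WORKSPACE_DEVELOPER: {"create", "modify"},
    Role.WORKSPACE_MEMBER: set(),
}


def authorize(role: Role, action: str) -> None:
    """Raise PermissionError unless the role may perform the action."""
    if action not in PERMISSIONS[role]:
        raise PermissionError(f"{role.value} may not {action} resources")


def handle_request(role: Role, action: str, resource: str) -> str:
    # The check lives in the handler, so console and SDK callers
    # pass through exactly the same validation.
    authorize(role, action)
    return f"{action} ok: {resource}"
```

Because the check sits in one place on the server side, a client that bypasses the console gains nothing: a Workspace Member calling `handle_request(Role.WORKSPACE_MEMBER, "delete", "agent-1")` in this sketch gets a `PermissionError` either way.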

Delete and archive functionality is now available at the workspace level across core entities, including Models, Providers, Knowledge Bases, RAG Endpoints, Guardrails, Agents, Tools, and Memory. This enables teams to safely manage resource lifecycles without breaking dependent workflows.

Permanent deletion requires explicit confirmation and dependency checks, reducing the risk of accidental data loss. Archive states allow teams to phase out unused resources while preserving traceability and configuration history.
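
The difference between archiving and permanent deletion can be sketched as follows. This is a simplified in-memory model under stated assumptions: the `Resource` and `Workspace` classes, the `depends_on` field, and the `confirm` flag are hypothetical names chosen for illustration and do not reflect the platform's internal data model.

```python
from dataclasses import dataclass, field


@dataclass
class Resource:
    name: str
    archived: bool = False
    # Names of other resources this one relies on (hypothetical field).
    depends_on: list = field(default_factory=list)


class Workspace:
    def __init__(self):
        self.resources = {}

    def add(self, resource):
        self.resources[resource.name] = resource

    def dependents_of(self, name):
        """Return the names of resources that still reference `name`."""
        return sorted(r.name for r in self.resources.values() if name in r.depends_on)

    def archive(self, name):
        # Archiving keeps the record, so configuration history stays traceable.
        self.resources[name].archived = True

    def delete(self, name, confirm=False):
        # Permanent deletion needs explicit confirmation and a clean dependency check.
        if not confirm:
            raise ValueError("permanent deletion requires explicit confirmation")
        dependents = self.dependents_of(name)
        if dependents:
            raise ValueError(f"{name} is still used by: {', '.join(dependents)}")
        del self.resources[name]
```

In this sketch, a knowledge base that an agent still depends on can be archived at any time, but it cannot be deleted until the agent is removed or repointed.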

Together, lifecycle controls and clearly defined roles keep access deliberate and make resources easier to manage across the platform.

For engineering teams running internal copilots or customer-facing AI systems, this introduces governance where it matters most.

Cost Visibility as an Operational Control

As model routing grows more dynamic, cost becomes less predictable.

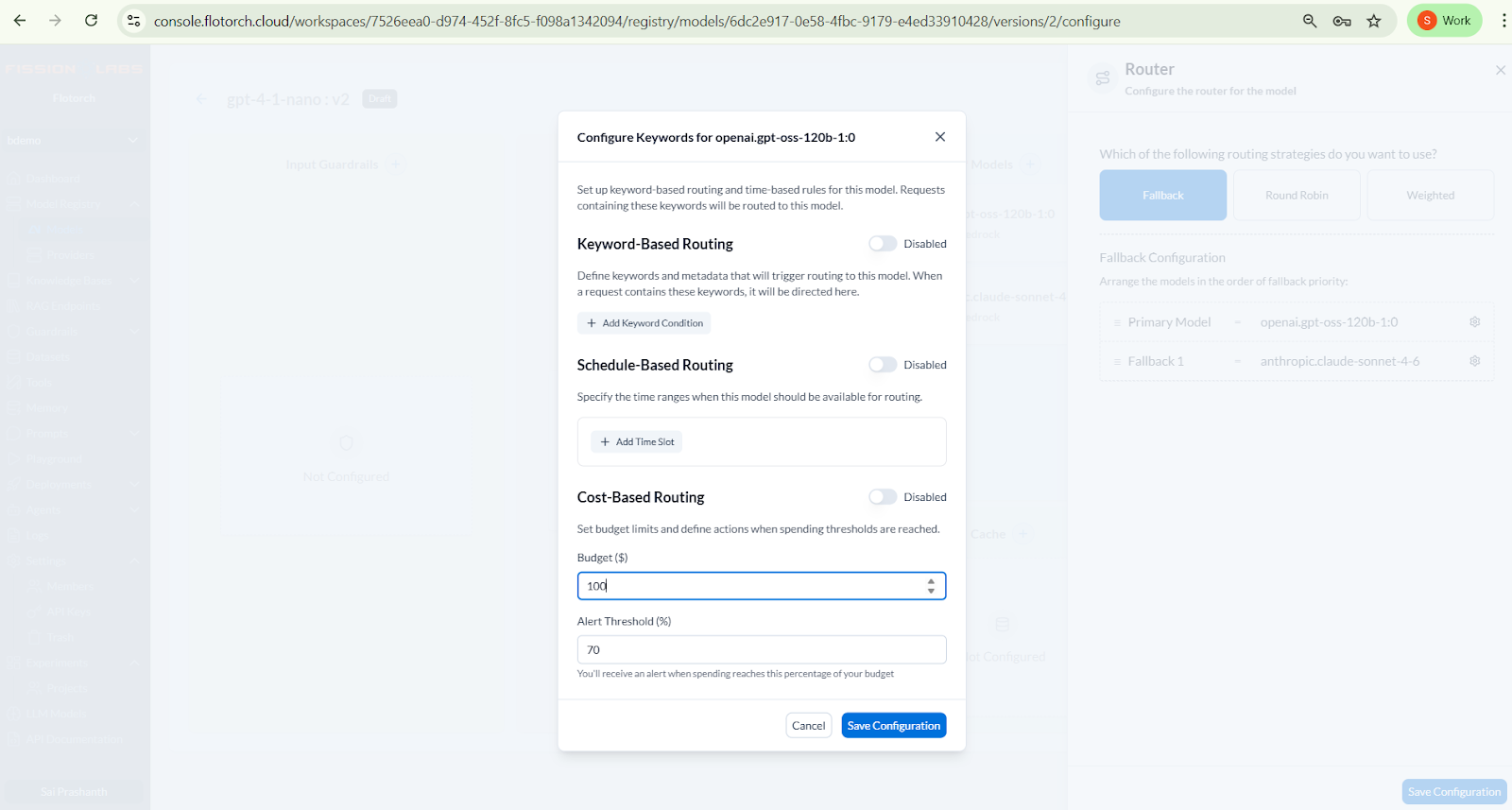

Fallback chains, evaluation runs, and multi-agent pipelines generate usage patterns that are difficult to estimate in advance. FloTorch introduces Cost-Based Routing to address this at the workspace level.

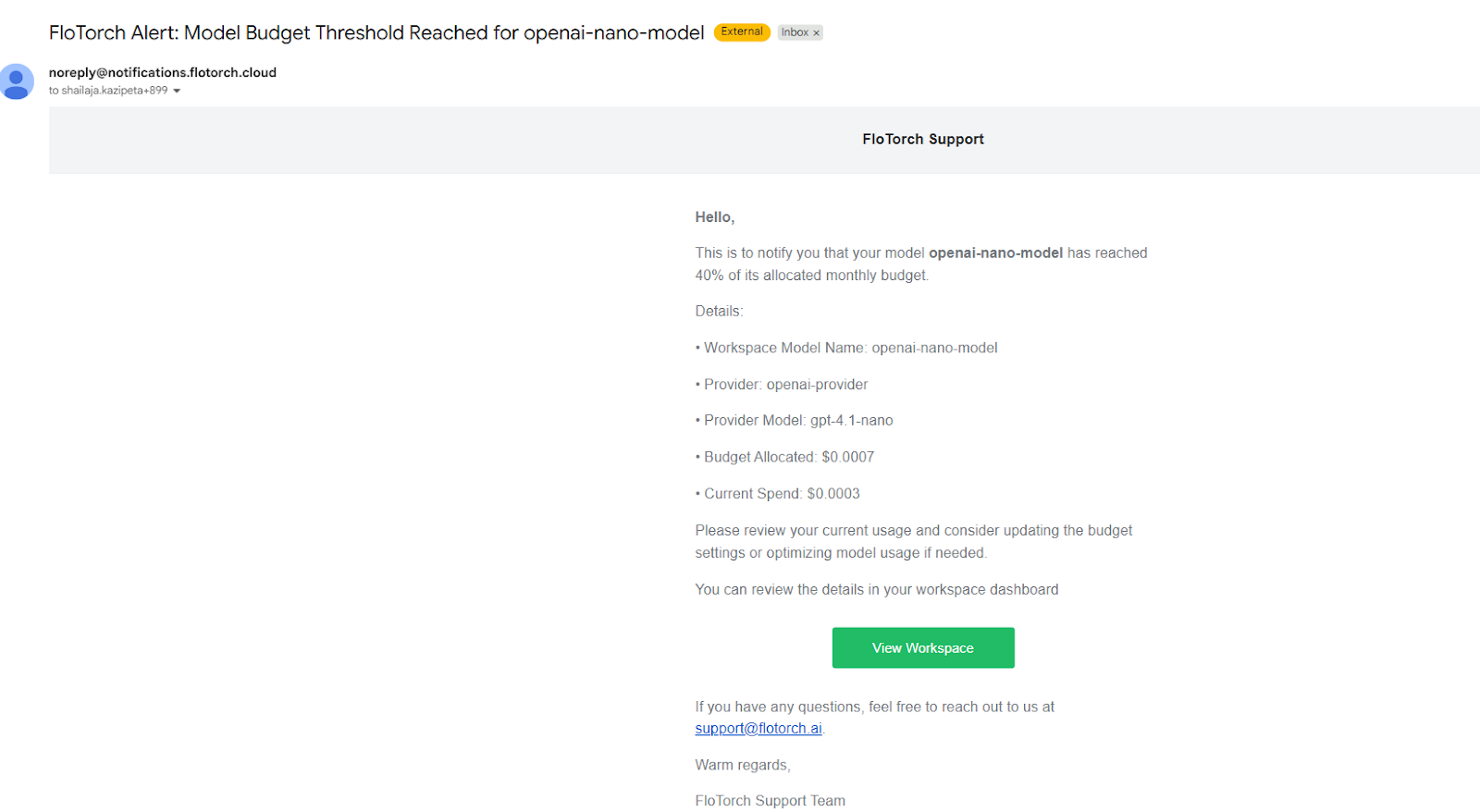

Workspace admins can define budget limits for individual models and configure alert thresholds based on the percentage of spend. When usage crosses a defined threshold or fully exhausts the allocated budget, notifications are triggered automatically.

This applies across all models available within FloTorch, allowing teams to manage costs consistently, regardless of the provider.

Because these alerts arrive in real time, teams can review usage, adjust routing strategies, or update budgets before overruns occur.
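
The threshold logic described above can be sketched in a few lines. This is an illustration only: the `budget_alerts` function, its default thresholds of 50% and 80%, and the message strings are assumptions, not the platform's configuration format.

```python
def budget_alerts(spend, budget, thresholds=(50, 80)):
    """Return an alert message for each percentage threshold the spend has crossed.

    `thresholds` are percentages of the budget; the defaults are illustrative.
    """
    if budget <= 0:
        raise ValueError("budget must be positive")
    alerts = [
        f"{pct}% of budget used"
        for pct in sorted(thresholds)
        # Compare without dividing to avoid floating-point rounding at the boundary.
        if spend * 100 >= pct * budget
    ]
    if spend >= budget:
        alerts.append("budget exhausted")
    return alerts


# Example: a model with a 100-unit budget that has spent 85 units.
print(budget_alerts(85, 100))
```

A real deployment would also need to remember which alerts were already sent so that each threshold notifies once rather than on every request.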

Cost is no longer something reviewed after the fact. It becomes an operational control that directly influences how AI systems are configured, scaled, and maintained in production.

Blueprint-Based Agent Workflows

One of the recurring challenges in AI development is turning prototypes into structured systems.

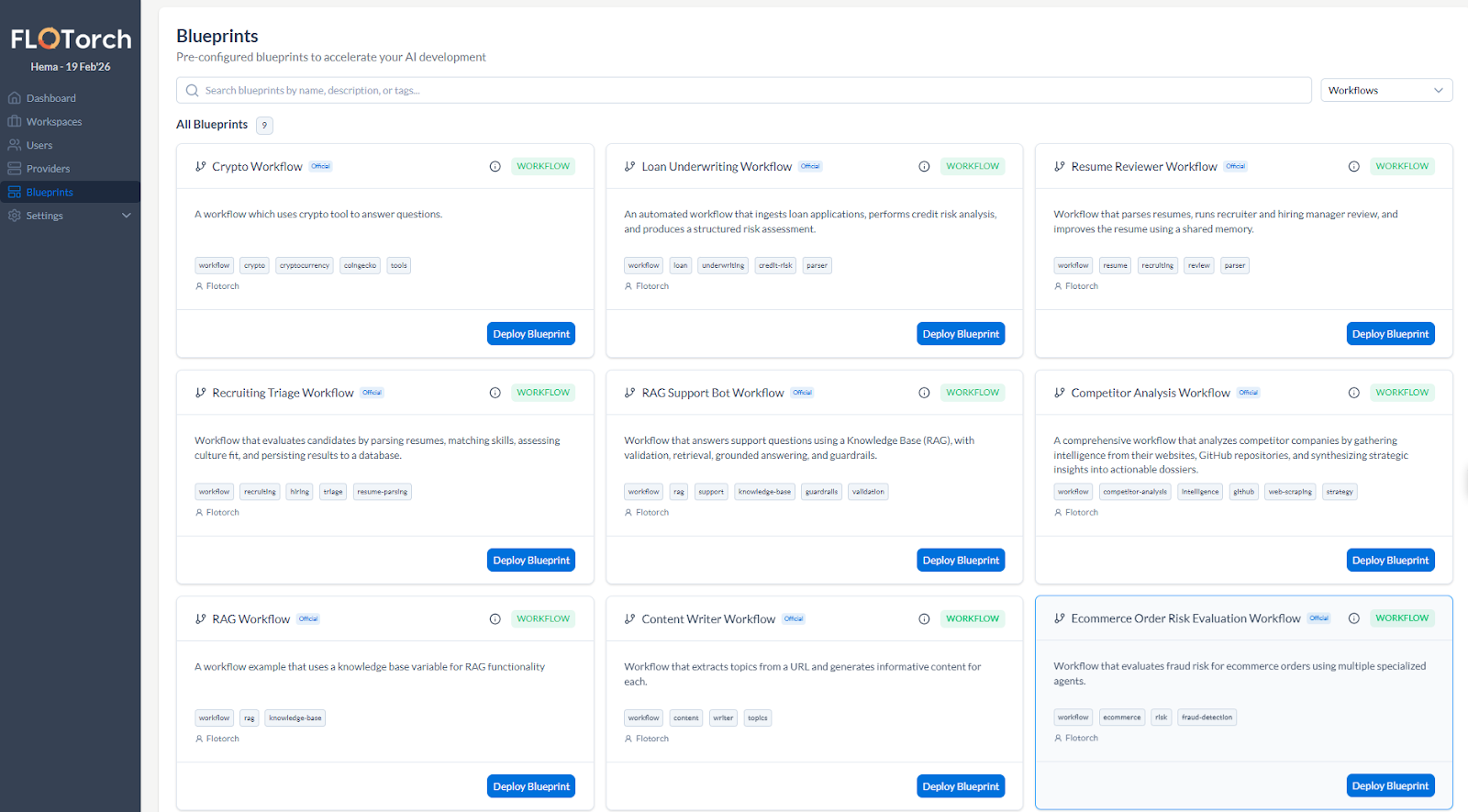

FloTorch expands its blueprint library with production-oriented workflows that demonstrate repeatable patterns.

These include workflows for competitor analysis, recruiting triage, knowledge-grounded support bots, resume evaluation, and loan decisioning.

What makes these useful is not the domain itself, but the architecture patterns they represent.

- Parallel agent execution

- RAG pipelines with validation layers

- Structured output handling

- Tool integrations

- Memory-backed context management

Instead of starting from an empty canvas, developers can begin with a working orchestration structure and adapt it to their domain. This reduces setup time and helps teams standardize how agents are composed and executed.
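
As an illustration of the first pattern in the list, parallel agent execution, here is a minimal sketch using Python's standard `asyncio` library. The `run_agent` function is a hypothetical placeholder for a real model or tool call, and the agent names are invented; a blueprint would wire in actual agents.

```python
import asyncio


async def run_agent(name: str, task: str) -> dict:
    # Placeholder for a real model or tool call (hypothetical).
    await asyncio.sleep(0.01)
    return {"agent": name, "summary": f"{name} handled: {task}"}


async def run_parallel(task: str, agents: list[str]) -> list[dict]:
    # asyncio.gather runs the agents concurrently and returns
    # results in the same order as the inputs.
    return await asyncio.gather(*(run_agent(a, task) for a in agents))


results = asyncio.run(
    run_parallel("competitor analysis", ["pricing", "features", "reviews"])
)
for result in results:
    print(result["summary"])
```

Because the results come back in input order, a downstream aggregation step can rely on a stable structure regardless of which agent finishes first.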

Improved Agentic Workflow Configuration and Runtime Clarity

Agent systems become harder to reason about as they grow.

Recent updates improve multi-agent workflow configuration inside the console and provide clearer runtime visibility through logs and performance metrics. Teams can better understand how requests move through their systems.

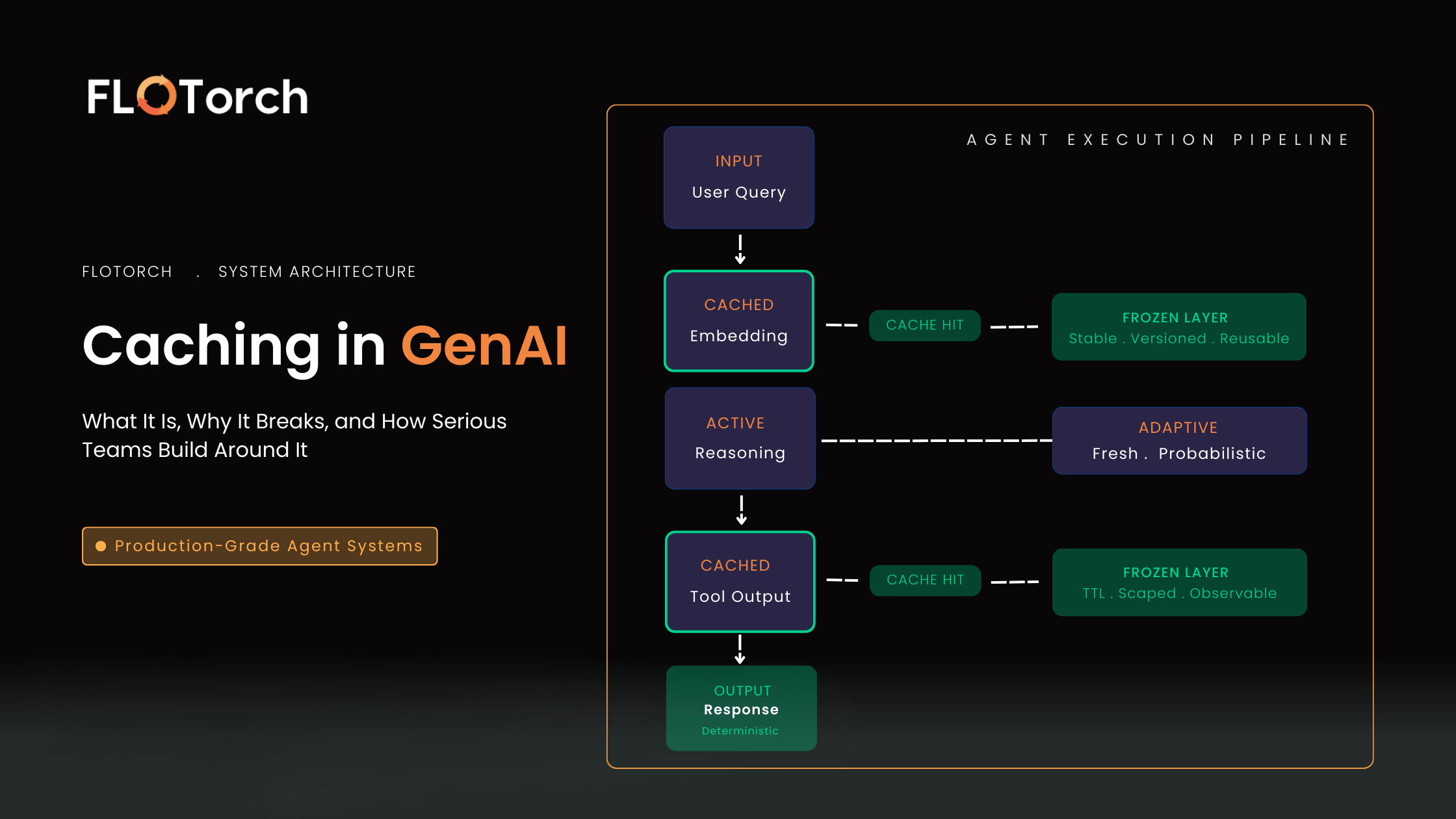

Semantic caching serves semantically equivalent requests from cache instead of re-running them, improving latency while reducing token consumption and inference spend. For knowledge-driven systems such as support bots, this means fewer redundant model calls and more predictable runtime costs.
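
The idea behind semantic caching can be shown with a toy example. This sketch is not FloTorch's implementation: it substitutes a bag-of-words vector for a real embedding model, and the `SemanticCache` class and its 0.8 similarity threshold are assumptions made for illustration.

```python
import math
from collections import Counter


def embed(text):
    # Toy stand-in for an embedding model: lowercase word counts.
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[w] * b[w] for w in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


class SemanticCache:
    def __init__(self, threshold=0.8):
        self.threshold = threshold
        self.entries = []  # list of (embedding, response) pairs

    def get(self, prompt):
        # Return a cached response if any stored prompt is similar enough.
        query = embed(prompt)
        for vector, response in self.entries:
            if cosine(query, vector) >= self.threshold:
                return response
        return None

    def put(self, prompt, response):
        self.entries.append((embed(prompt), response))


cache = SemanticCache()
cache.put("how do I reset my password", "Use the reset link on the login page.")
# A near-duplicate phrasing hits the cache, so no model call is needed.
print(cache.get("how do I reset my password please"))
```

The threshold is the main design choice: set it too low and unrelated questions share answers, set it too high and the cache rarely hits.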

These improvements may appear incremental, but they directly affect daily development, debugging workflows, and production efficiency.

Evaluation and Dataset Maturity

As AI systems move into production, evaluation becomes a continuous process rather than a one-time experiment. The difficulty is not just scoring outputs. It is building and maintaining high-quality datasets that reflect real usage.

FloTorch expands the platform’s dataset management capabilities to support this shift.

Teams can now generate evaluation datasets in multiple ways:

- Upload PDF files to automatically generate synthetic datasets

- Capture real model traces and convert them into structured evaluation data

- Import standard datasets directly from Hugging Face

- Manually curate evaluation samples when needed

Trace capture is particularly practical. Instead of designing evaluation datasets in isolation, teams can convert real model interactions into reusable evaluation inputs through auto-capture. This creates a feedback loop between production traffic and validation workflows.
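
A rough sketch of the trace-to-dataset step follows. The trace schema (`input` and `output` keys), the output field names, and both helper functions are hypothetical; the actual auto-capture format is not described here.

```python
import json


def traces_to_eval_rows(traces):
    """Convert captured traces into deduplicated evaluation rows.

    Each trace is assumed to be a dict with "input" and "output" keys.
    """
    seen = set()
    rows = []
    for trace in traces:
        prompt = trace.get("input", "").strip()
        output = trace.get("output", "").strip()
        # Skip incomplete traces and prompts that were already captured.
        if not prompt or not output or prompt in seen:
            continue
        seen.add(prompt)
        rows.append({"question": prompt, "reference_answer": output})
    return rows


def to_jsonl(rows):
    # One JSON object per line, a common format for evaluation datasets.
    return "\n".join(json.dumps(row) for row in rows)


captured = [
    {"input": "What is the refund window?", "output": "30 days."},
    {"input": "What is the refund window?", "output": "30 days."},
    {"input": "", "output": "orphaned response"},
]
print(to_jsonl(traces_to_eval_rows(captured)))
```

Captured outputs are only a starting point for reference answers; they usually need human review before they are trusted as ground truth.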

Synthetic dataset creation from documents lowers the barrier for validating retrieval systems or domain-specific prompts without manually constructing large datasets.

Evaluation, trace capture, and benchmarking become part of the system lifecycle rather than separate exercises.

A Step Toward Operational Maturity

Across recent releases, FloTorch has focused on strengthening the foundations required to operate AI systems responsibly.

- Governance boundaries

- Workspace-level cost awareness

- Reusable orchestration patterns

- Structured dataset and evaluation workflows

Production AI requires more than model access. It requires control, visibility, and repeatable validation.

As AI systems grow more complex, reliability depends on how well teams manage permissions, cost, orchestration, and evaluation together. That is the direction these updates support.

Start Building with FloTorch

If you are designing multi-agent systems, RAG pipelines, or governed AI workflows, the fastest way to evaluate a platform is to use it.

Create a workspace, configure your models, and deploy your first structured workflow.

Get Started with FloTorch: https://console.flotorch.cloud/auth/signup?provider=flotorch

- User Manual: https://docs.flotorch.cloud/

- API Documentation: https://docs.flotorch.cloud/gateway/api-documentation/

- SDK Guides: https://docs.flotorch.cloud/sdk/

- ADK Guides: https://docs.flotorch.cloud/adk/

Build something real. Measure it. Operate it with confidence.
