What's New in FloTorch: Smarter Evaluations, Prompt-Driven Blueprints, and Full Agent Observability

April was a significant month for FloTorch. Across several releases, the platform moved forward on the three fronts that matter most to teams building production AI systems: observability, workflow automation, and agent evaluation. Here's a look at what's new.

Observability You Can Actually Act On

A consistent theme this month was a substantial upgrade to how teams monitor and debug their AI workflows.

Early in April, FloTorch introduced enhanced logs and traces with advanced filtering by operations, resources, and execution IDs, along with real-time visibility into action-level logs on the Playground and workflow execution screens. A long-standing issue affecting the visibility of traces older than 90 days was also resolved, giving teams reliable access to historical runs.

Later in the month, FloTorch added evaluation-level log grouping. All runs that belong to an evaluation are now grouped under a common execution ID, so you can track an entire evaluation workflow end to end while still drilling into individual run details. For teams running multi-step evaluations across agents, this substantially reduces the time spent correlating logs and identifying root causes.
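To make the idea behind evaluation-level grouping concrete, here is a minimal Python sketch that groups log records first by a shared execution ID and then by individual run, with each run's records in chronological order. The `LogRecord` type, its fields, and `group_by_execution` are hypothetical illustrations; they do not reflect FloTorch's actual log schema or API.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class LogRecord:
    # Hypothetical fields for illustration; FloTorch's real log schema may differ.
    execution_id: str  # shared by every run in one evaluation
    run_id: str        # identifies a single run within that evaluation
    timestamp: float
    message: str


def group_by_execution(records: list[LogRecord]) -> dict[str, dict[str, list[LogRecord]]]:
    """Group records by execution ID, then by run ID, each run sorted by time."""
    grouped = defaultdict(lambda: defaultdict(list))
    for record in records:
        grouped[record.execution_id][record.run_id].append(record)
    # Sort each run's records chronologically so a run reads end to end.
    for runs in grouped.values():
        for run_records in runs.values():
            run_records.sort(key=lambda r: r.timestamp)
    # Convert the nested defaultdicts to plain dicts for predictable output.
    return {eid: dict(runs) for eid, runs in grouped.items()}


logs = [
    LogRecord("eval-1", "run-b", 2.0, "tool call: search"),
    LogRecord("eval-1", "run-a", 1.0, "start"),
    LogRecord("eval-2", "run-c", 0.5, "start"),
    LogRecord("eval-1", "run-a", 3.0, "finish"),
]

for execution_id, runs in group_by_execution(logs).items():
    print(execution_id)
    for run_id, run_records in runs.items():
        print(f"  {run_id}: {[r.message for r in run_records]}")
```

The top-level key answers "which evaluation?" while the inner key answers "which run?", which mirrors the end-to-end-then-drill-down view described above.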

The result: less guesswork, faster debugging, and a clearer picture of what your agents are actually doing in production.

Blueprints Built from Natural Language

Over the course of the month, Blueprint Generation went from a promising first step to a genuinely production-ready feature.

The month opened with prompt-based blueprint creation, which lets users describe what they need in plain language and receive a structured blueprint in return. This lowers the barrier for teams that want to standardize their evaluation setup without manual configuration. FloTorch also introduced eight ready-to-use evaluation blueprints covering LLM, RAG, agent workflow, and prompt evaluation patterns: useful starting points that reduce setup time and promote consistency.

By mid-April, this capability had evolved into something more powerful. Users can now describe workflow requirements in natural language and receive complete, deployment-ready blueprints that can be pushed directly into workspaces as executable workflows. The gap between "idea" and "running agent" has narrowed considerably, with no manual assembly required.

Agent Evaluation Gets a Full Picture

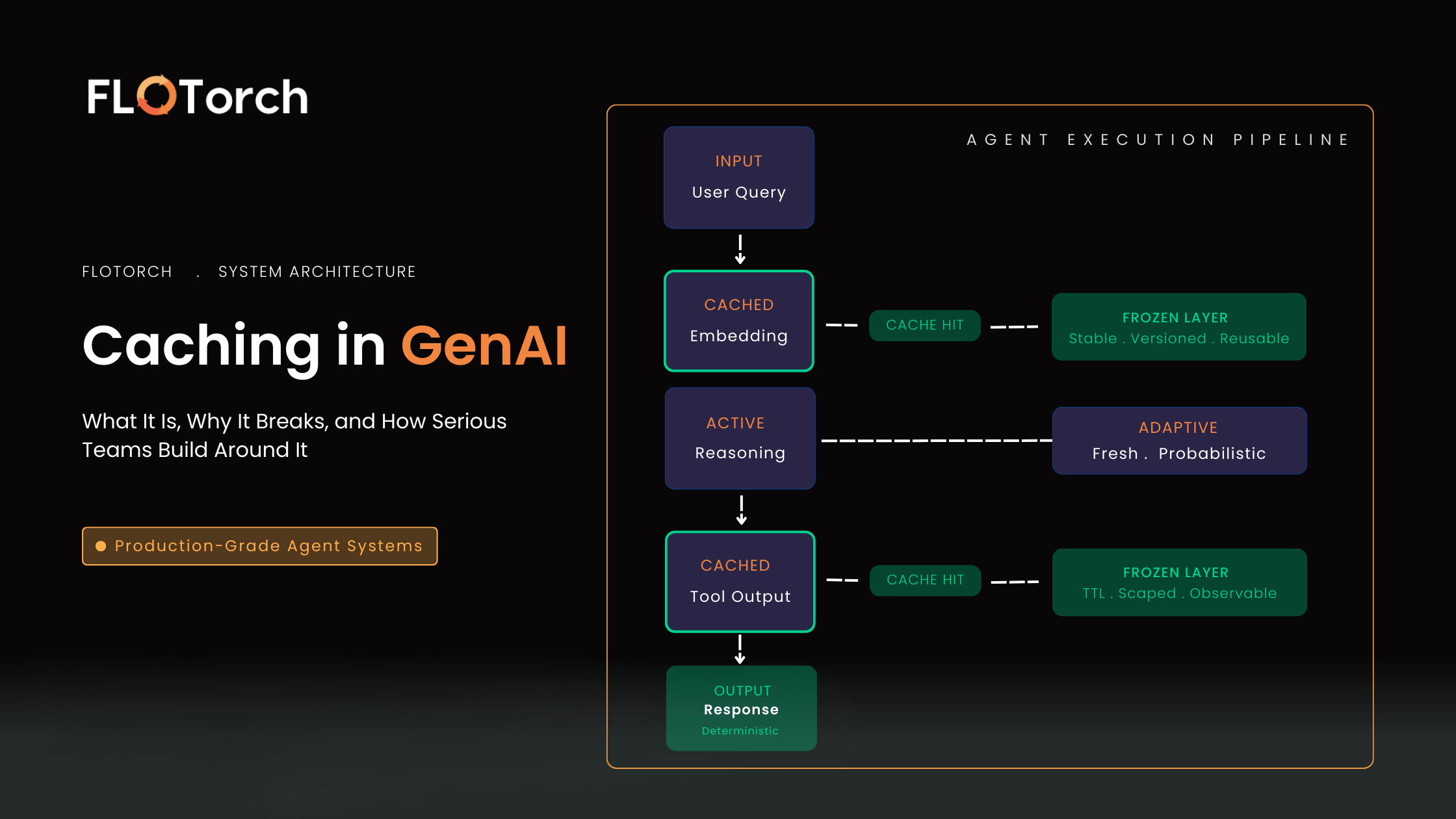

The standout addition at the end of April is Agent & Workflow Tool Call Evaluation. It addresses one of the more persistent blind spots in agentic AI: understanding not just what an agent produced, but how it got there.

This evaluation framework combines LLM-based judgment with structured performance metrics to assess tool selection accuracy, argument correctness, task success, execution efficiency, and reasoning quality, all within the context of real workflows. Alongside qualitative assessment of planning and tool interaction, it tracks operational metrics such as latency, token usage, and cost.
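As a rough illustration of the structured side of such metrics, the Python sketch below compares an agent's tool calls against a reference trajectory to compute tool selection accuracy and argument correctness, and totals latency and tokens. The `ToolCall` type, `score_tool_calls`, and the positional, exact-match scoring rules are all hypothetical simplifications, not FloTorch's metric definitions, and the sketch omits the LLM-based judgment of planning and reasoning quality entirely.

```python
from dataclasses import dataclass, field
from typing import Any


@dataclass
class ToolCall:
    # Hypothetical representation of one tool invocation by an agent.
    name: str
    arguments: dict[str, Any] = field(default_factory=dict)
    latency_ms: float = 0.0
    tokens: int = 0


def score_tool_calls(expected: list[ToolCall], actual: list[ToolCall]) -> dict[str, float]:
    """Compare actual tool calls step by step against a reference trajectory.

    Simplified, rule-based illustration only: calls are paired by position and
    arguments must match exactly.
    """
    steps = max(len(expected), len(actual))
    if steps == 0:
        return {"tool_selection_accuracy": 1.0, "argument_correctness": 1.0,
                "total_latency_ms": 0.0, "total_tokens": 0}
    # zip stops at the shorter list, so missing or extra calls count as
    # misses through the `steps` denominator.
    matched = [(e, a) for e, a in zip(expected, actual) if e.name == a.name]
    correct_args = sum(1 for e, a in matched if e.arguments == a.arguments)
    return {
        "tool_selection_accuracy": len(matched) / steps,
        # Arguments are only judged on steps where the right tool was chosen.
        "argument_correctness": correct_args / len(matched) if matched else 0.0,
        "total_latency_ms": sum(c.latency_ms for c in actual),
        "total_tokens": sum(c.tokens for c in actual),
    }


expected = [ToolCall("search", {"query": "refund policy"}),
            ToolCall("lookup_order", {"order_id": "A1"})]
actual = [ToolCall("search", {"query": "refund policy"}, latency_ms=120.0, tokens=300),
          ToolCall("lookup_order", {"order_id": "B2"}, latency_ms=80.0, tokens=150)]
print(score_tool_calls(expected, actual))
```

Here the agent picks the right tool at both steps but passes the wrong `order_id` at the second, so selection accuracy stays perfect while argument correctness drops to one half. Separating the two scores is what lets you tell a planning failure from an execution failure; a production evaluator would likely also tolerate reordered or equivalent calls, which this positional sketch does not.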

For teams building agents that interact with external tools (search, databases, APIs, code execution), this provides the end-to-end visibility that production deployments require. You can benchmark agents over time, identify exactly where failures occur (in planning, execution, or tool use), and improve performance with confidence.

Expanded Integrations and Developer Tooling

April also brought meaningful progress on integrations. FloTorch now supports the Anthropic Messages API, making it compatible with Claude Code, and has expanded external integration support to OpenCode, VS Code extensions, and Amazon Q. This gives development teams more flexibility in how they work with the platform, whether through the FloTorch UI, the SDK, or directly within their existing development environment.

Multi-turn dataset support for agent evaluations was also added, enabling more realistic testing of conversational and task-oriented agents that operate across multiple turns.

A Milestone: Feature Complete

April's final release marks an important moment for the platform. FloTorch is now feature complete, meaning its core capability set is in place. Going forward, updates will focus on stability, reliability, and targeted enhancements based on user feedback.

If you've been evaluating options for your GenAI stack, this is the right moment to explore FloTorch. The foundation is solid, the evaluation tooling is comprehensive, and the platform is designed to grow with production workloads, not just experiments.

Get Started

FloTorch is available on AWS Marketplace and GitHub.

Full documentation: docs.flotorch.cloud

Questions or feedback? Reach the team at support@flotorch.ai
